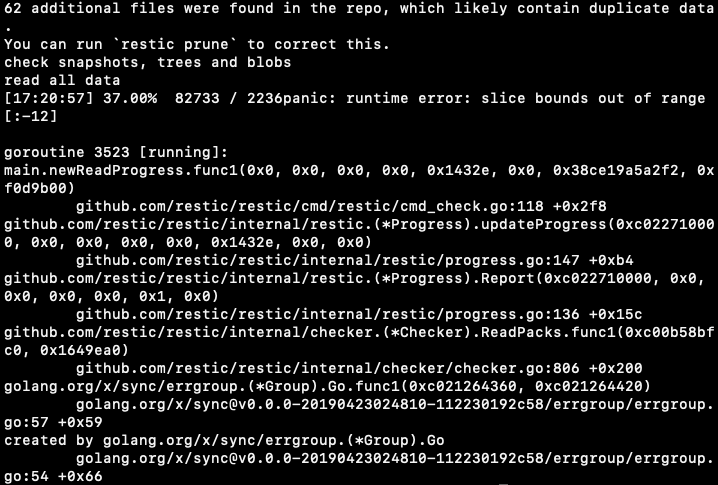

Hmm, I’m using one of the beta versions (v0.9.6-353-gfa135f72). I wonder if this is a bug? A normal restic check finishes just fine, with no errors apart from the duplicate files.

I’m going to try it again with v0.9.6-364-gb1b3f1ec, and then with the stable release if that doesn’t work.