Sure thing. Copied my latest log folder out, and ran some more tests:

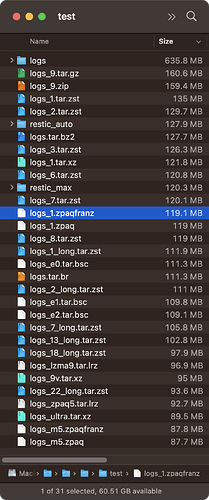

Sample of results near zpaqfranz -m1, sorted largest to smallest:

restic_auto, 3.600s, 127.9MB

xz_1, 15.271s, 121.8MB

zstd_6, 2.198s, 120.8MB

restic_max, 6.721s, 120.3MB

zstd_7, 2.820s, 120.1MB

zpaqfranz_1, 9.016s, 119.1MB

zpaq_1, 7.743s, 119MB

zstd_8, 3.223s, 119MB

zstd_1_long, 2.318s, 111.9MB

bsc_e0, 34.241s, 111.3MB

zstd_2_long, 2.343s, 111MB

zstd_7_long, 2.934s, 105.8MB

zstd_13_long, 7.861s, 102.8MB

zstd_18_long, 62.84s, 97.9MB

Sample of results near zpaqfranz -m1, sorted slowest to fastest:

zstd_18_long, 62.84s, 97.9MB

bsc_e0, 34.241s, 111.3MB

xz_1, 15.271s, 121.8MB

zpaqfranz_1, 9.016s, 119.1MB

zstd_13_long, 7.861s, 102.8MB

zpaq_1, 7.743s, 119MB

restic_max, 6.721s, 120.3MB

restic_auto, 3.600s, 127.9MB

zstd_8, 3.223s, 119MB

zstd_7_long, 2.934s, 105.8MB

zstd_7, 2.820s, 120.1MB

zstd_2_long, 2.343s, 111MB

zstd_1_long, 2.318s, 111.9MB

zstd_6, 2.198s, 120.8MB
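
For anyone who wants to re-sort these numbers another way, here is a minimal Python sketch. It is not the script used for these tests; the `parse_result` helper and the `RESULTS` subset are made up for illustration and just assume the "name, seconds, size" layout of the lines above.

```python
# Illustrative only: a handful of the rows above, in "name, seconds, size" form.
RESULTS = """\
restic_auto, 3.600s, 127.9MB
zstd_6, 2.198s, 120.8MB
zpaqfranz_1, 9.016s, 119.1MB
zpaq_1, 7.743s, 119MB
zstd_18_long, 62.84s, 97.9MB
"""


def parse_result(line):
    """Split 'name, 1.234s, 56.7MB' into (name, seconds, megabytes)."""
    name, secs, size = (part.strip() for part in line.split(","))
    # Drop the trailing "s" and "MB" unit suffixes before converting.
    return name, float(secs[:-1]), float(size[:-2])


rows = [parse_result(line) for line in RESULTS.splitlines() if line.strip()]

# Largest archive first, then slowest run first.
by_size = sorted(rows, key=lambda r: r[2], reverse=True)
by_time = sorted(rows, key=lambda r: r[1], reverse=True)

for name, secs, mb in by_time:
    print(f"{name}, {secs}s, {mb}MB")
```

Swapping the sort key (or sorting on a size-per-second ratio) makes it easy to eyeball other trade-offs.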

Zpaqfranz seemed fast, though of course not as fast as Zstandard. Interestingly enough, it was also a bit slower than regular Zpaq (9.016s vs 7.743s) and a hair larger (119.1MB vs 119MB).

I’m not a huge Facebook fan, but the one good thing that came out of Meta was Zstandard. So fast and efficient. Glad that’s what Restic decided to use!

Thanks to everyone who worked on this, especially @MichaelEischer!!